Measuring Acidity

Any time that a measurement can vary over many orders of magnitude, that's a candidate for using a log scale.

One example is the pH scale, which shows the concentration of hydrogen ions [H+] in a water-based solution. The concentration can be as high as 1 mole of H+ for every 10 L of water (extremely acidic), or as low as 1 mole of H+ for every 100,000,000,000,000 L of water (extremely basic). For a refresher on describing chemical quantities in moles, see the MathBench module on Calculating Molar Weight.

Instead of counting out the zeros every time, we use a log scale. pH is defined as the negative base-10 log of the H+ concentration, measured in moles per liter.

Extremely acidic: [H+] = 0.1 moles/L, so pH = −log(0.1) = 1

Extremely basic: [H+] = 0.00000000000001 moles/L, so pH = −log(0.00000000000001) = 14
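The two calculations above can be checked with a few lines of Python. This is only a minimal sketch: the function name `ph_from_concentration` is made up for illustration, and it assumes the concentration is given in moles per liter.

```python
import math

def ph_from_concentration(h_conc_mol_per_l: float) -> float:
    """Return pH = -log10([H+]) for a concentration in moles per liter.

    The function name is hypothetical; only the formula comes from the text.
    """
    if h_conc_mol_per_l <= 0:
        raise ValueError("concentration must be positive")
    return -math.log10(h_conc_mol_per_l)

# The two extremes described in the text.
for label, conc in [("extremely acidic", 0.1), ("extremely basic", 1e-14)]:
    print(f"{label}: [H+] = {conc:g} mol/L, pH = {ph_from_concentration(conc):.1f}")
```

Writing the concentration as `1e-14` instead of typing out fourteen decimal places is the same "stop counting zeros" idea that motivates the log scale.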

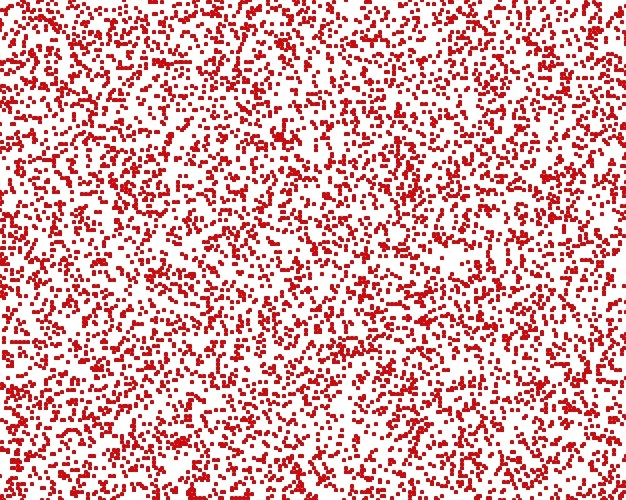

Imagine that the space below shows a very tiny quantity of water (9 × 10⁻¹⁵ liters, to be exact). Clicking on the buttons will show you the visual representation of pH 1 (super acidic) through 5 (still slightly acidic).

[Interactive applet: Visualizing pH. Choosing a pH from 1 to 5 shows the concentration of H+ and the log of that concentration.]
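If the applet isn't available, the numbers it reports for pH 1 through 5 can be reproduced by running the definition of pH backwards. The sketch below is an illustration, not the applet's own code, and the function name `concentration_from_ph` is an assumption.

```python
import math

def concentration_from_ph(ph: float) -> float:
    """Invert pH = -log10([H+]) to get [H+] in moles per liter."""
    return 10.0 ** (-ph)

# Same range of pH values the applet offers: 1 through 5.
for ph in range(1, 6):
    conc = concentration_from_ph(ph)
    print(f"pH = {ph}: [H+] = {conc:.0e} mol/L, log10([H+]) = {math.log10(conc):.0f}")
```

Each row shows a concentration ten times smaller than the row before it, while the log changes by exactly 1.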

As a general rule, when you add 1 to a log number, it's the same as multiplying the unlogged measurement by 10:

1 on a log scale corresponds to 10 on an arithmetic (linear) scale

2 on a log scale corresponds to 100 on an arithmetic (linear) scale

3 on a log scale corresponds to 1000 on an arithmetic (linear) scale

and so on...
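The pattern in this list can be spot-checked in Python. In this small sketch, `unlog` is a made-up helper name for "undo the base-10 log"; it is not a standard library function.

```python
import math

def unlog(log_value):
    """Convert a base-10 log value back to the arithmetic (linear) scale."""
    return 10 ** log_value

# Each step of 1 on the log scale multiplies the linear value by 10.
for log_value in [1, 2, 3]:
    print(f"{log_value} on a log scale corresponds to {unlog(log_value)} on a linear scale")

# The rule also holds between whole numbers, for example from 2.5 to 3.5.
print(math.isclose(unlog(2.5 + 1), 10 * unlog(2.5)))
```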

It works a little differently with pH, because pH is the negative log of concentration. So, every time we SUBTRACT a single pH unit (like going from 2 to 1), we multiply H+ by 10.
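To see the sign flip at work, here is a short sketch that computes the factor by which H+ changes between two pH values. The function name `h_fold_change` is hypothetical; the only thing taken from the text is that pH is the negative log of concentration.

```python
def h_fold_change(ph_start: float, ph_end: float) -> float:
    """Factor by which [H+] is multiplied when pH goes from ph_start to ph_end.

    Because pH = -log10([H+]), a lower pH means a higher concentration,
    so the exponent is ph_start - ph_end (not the other way around).
    """
    return 10.0 ** (ph_start - ph_end)

print(h_fold_change(2, 1))  # pH drops by 1: H+ is multiplied by 10
print(h_fold_change(1, 2))  # pH rises by 1: H+ is divided by 10
print(h_fold_change(5, 1))  # pH drops by 4: H+ is multiplied by 10,000
```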

Unfortunately, the applet above can't show anything less acidic than pH = 5, because we'd have to make the screen huge! To show a pH of 14, we'd need the screen to be more than 10,000 times taller and 10,000 times wider, and this humongous screen would contain only a single dot.

Copyright University of Maryland, 2007

You may link to this site for educational purposes.

Please do not copy without permission

requests/questions/feedback email: mathbench@umd.edu